TensorBoard data formats

Data types

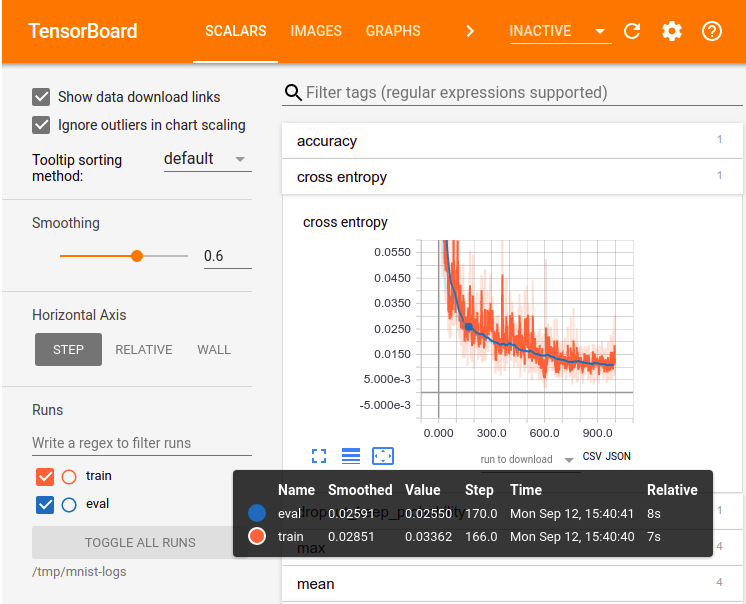

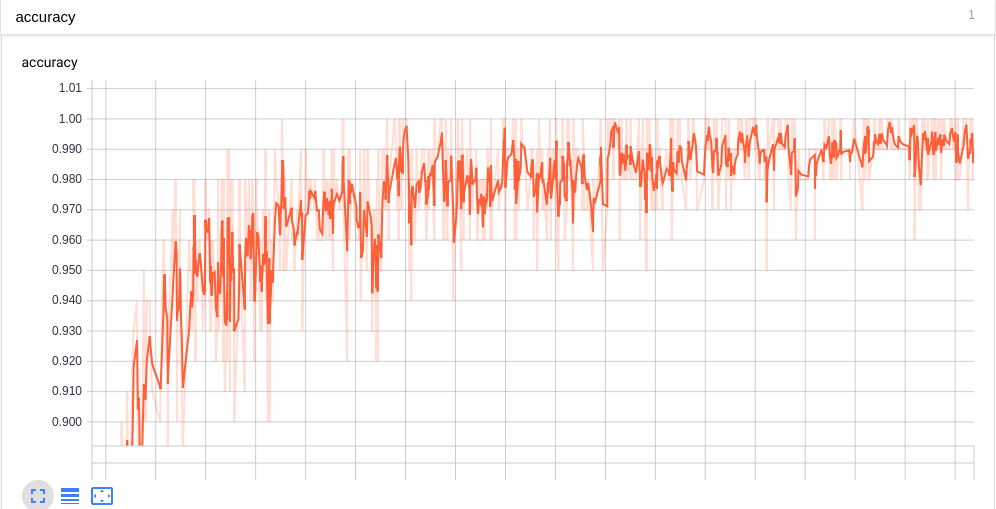

1: Scalars

2: Images

3: Audio

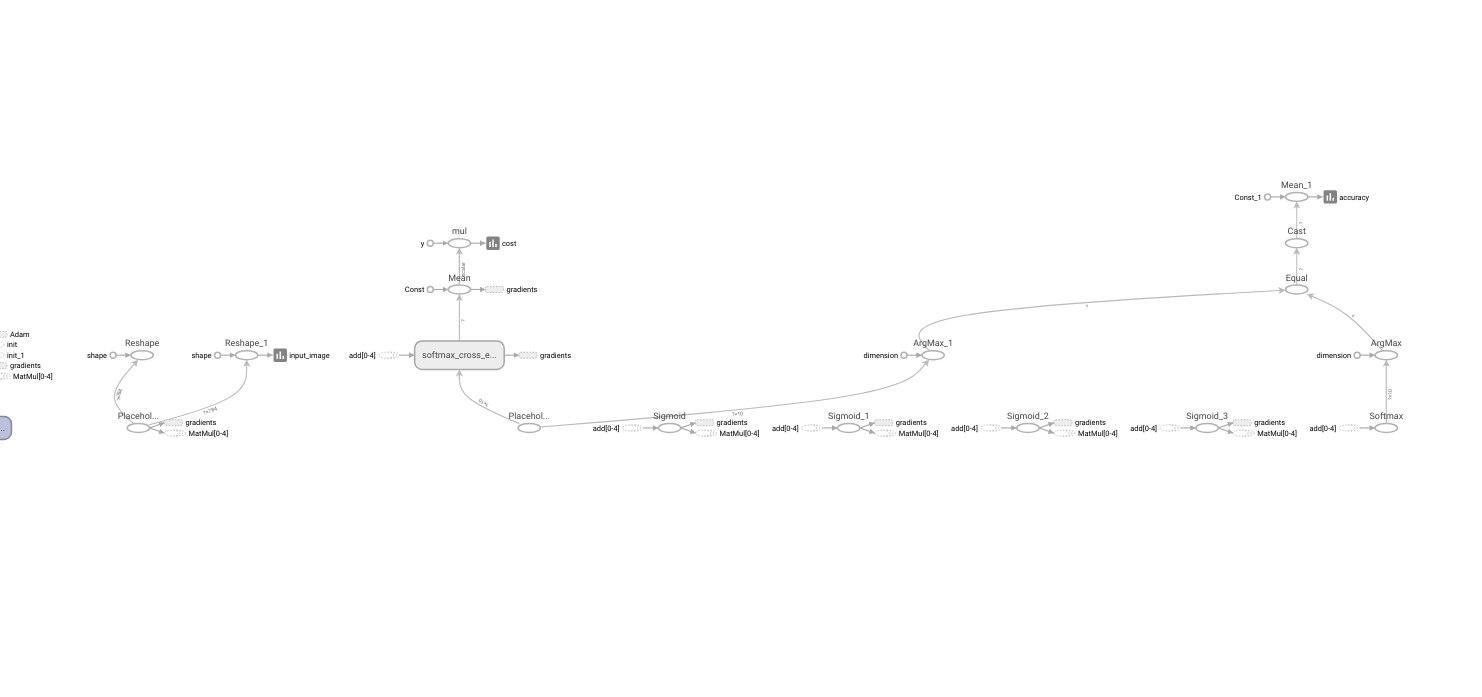

4: Graph (the computation graph)

5: Distributions

6: Histograms

7: Embeddings
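As a rough illustration of what a histogram summary captures, the sketch below counts how many values of a weight vector fall into each bucket. The helper name `bucket_counts`, the sample values, and the equal-width buckets are assumptions made for this example; TensorBoard's own bucketing scheme is different, so treat this only as the general idea.

```python
def bucket_counts(values, lower, upper, num_buckets):
    """Count how many values fall into each of num_buckets equal-width buckets."""
    width = (upper - lower) / num_buckets
    counts = [0] * num_buckets
    for v in values:
        if v < lower or v > upper:
            continue  # values outside [lower, upper] are ignored in this sketch
        # the upper edge is folded into the last bucket
        idx = min(int((v - lower) / width), num_buckets - 1)
        counts[idx] += 1
    return counts


# hypothetical weight values, not taken from the demo network
weights = [-0.9, -0.2, -0.1, 0.0, 0.05, 0.3, 0.35, 0.8]
counts = bucket_counts(weights, -1.0, 1.0, 4)
print(counts)
```

Recording such counts at successive training steps is what lets a dashboard show how the spread of a tensor's values changes over time.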

Code for adding data

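import tensorflow as tf

# TensorFlow 1.x summary ops; each one records one kind of data

tf.summary.scalar(name, scalar)

tf.summary.image(name, image)

tf.summary.histogram(name, histogram)

tf.summary.audio(name, audio, sample_rate)

# Distributions are drawn from histogram summaries; there is no separate tf.summary.distribution op

# The graph is written by tf.summary.FileWriter(logs_path, graph=...), not by a summary op

# Embeddings are configured through the TensorBoard projector plugin, not through a tf.summary op

TensorBoard visualization process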

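- Build the computation graph. The data you want to inspect comes from this graph.

- Place summary operations on the graph nodes whose values you want to record.

- Run the summary operations. Defining a summary operation does not execute it; it produces data only when it is run. Running many summary operations one by one is tedious, so tf.summary.merge_all() can merge them into a single node. Running that node produces all of the configured summary data.

- Save the data to local disk with tf.summary.FileWriter(logs_path, graph=my_graph), writing each result with writer.add_summary.

- Run the whole program, start TensorBoard from the command line, then open the web page to view the visualizations.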
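Before starting TensorBoard, it can help to confirm that the program actually wrote event files into the log directory. The sketch below assumes TensorFlow's usual `events.out.tfevents.*` file naming; the helper name `find_event_files` is hypothetical, and `five_layers_log` is the log directory used by the demo later in this post.

```python
import glob
import os


def find_event_files(logdir):
    """Return the TensorBoard event files found anywhere under logdir, sorted."""
    # "**" with recursive=True also matches files directly inside logdir
    pattern = os.path.join(logdir, "**", "events.out.tfevents.*")
    return sorted(glob.glob(pattern, recursive=True))


files = find_event_files("five_layers_log")
if files:
    print("Found", len(files), "event file(s):")
    for path in files:
        print(" ", path)
else:
    print("No event files yet; run the training script first.")
```

An empty result usually means the script has not run yet or that it wrote to a different path than the one passed to --logdir.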

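Python code

This demo uses the handwritten digit recognition data (MNIST) that ships with TensorFlow. The code targets the TensorFlow 1.x API (tf.placeholder, tf.Session).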

from tensorflow.examples.tutorials.mnist import input_data

import tensorflow as tf

import os

os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'

logs_path = "five_layers_log"

batch_size = 100

learning_rate = 0.003

training_epochs = 10

mnist = input_data.read_data_sets("../data", one_hot=True)

x = tf.placeholder(tf.float32, [None, 784])

y = tf.placeholder(tf.float32, [None, 10])

# five layers neural network

first_layer = 200

second_layer = 100

third_layer = 60

fourth_layer = 30

fifth_layer = 10

# weight and bias

W1 = tf.Variable(tf.truncated_normal([784, first_layer], stddev=0.1))

B1 = tf.Variable(tf.zeros([first_layer]))

W2 = tf.Variable(tf.truncated_normal([first_layer, second_layer], stddev=0.1))

B2 = tf.Variable(tf.zeros([second_layer]))

W3 = tf.Variable(tf.truncated_normal([second_layer, third_layer], stddev=0.1))

B3 = tf.Variable(tf.zeros([third_layer]))

W4 = tf.Variable(tf.truncated_normal([third_layer, fourth_layer], stddev=0.1))

B4 = tf.Variable(tf.zeros([fourth_layer]))

W5 = tf.Variable(tf.truncated_normal([fourth_layer, fifth_layer], stddev=0.1))

B5 = tf.Variable(tf.zeros([fifth_layer]))

XX = tf.reshape(x, [-1, 784])

Y1 = tf.nn.sigmoid(tf.matmul(x, W1) + B1)

Y2 = tf.nn.sigmoid(tf.matmul(Y1, W2) + B2)

Y3 = tf.nn.sigmoid(tf.matmul(Y2, W3) + B3)

Y4 = tf.nn.sigmoid(tf.matmul(Y3, W4) + B4)

Ylogits = tf.matmul(Y4, W5) + B5

Y = tf.nn.softmax(Ylogits)

cross_entropy = tf.nn.softmax_cross_entropy_with_logits(logits=Ylogits, labels=y)

cross_entropy = tf.reduce_mean(cross_entropy)*100

correct_prediction = tf.equal(tf.argmax(Y, 1), tf.argmax(y, 1))

accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

train_step = tf.train.AdamOptimizer(learning_rate).minimize(cross_entropy)

tf.summary.scalar("cost", cross_entropy)

tf.summary.scalar("accuracy", accuracy)

tf.summary.image("input_image", tf.reshape(x, [-1, 28, 28, 1]), 10)

summary_op = tf.summary.merge_all()

init = tf.global_variables_initializer()

with tf.Session() as sess:

    sess.run(init)

    writer = tf.summary.FileWriter(logs_path, graph=tf.get_default_graph())

    for epoch in range(training_epochs):

        batch_count = int(mnist.train.num_examples/batch_size)

        for i in range(batch_count):

            batch_x, batch_y = mnist.train.next_batch(batch_size)

            _, summary = sess.run([train_step, summary_op], feed_dict={x: batch_x, y: batch_y})

            # give every batch its own increasing step so points do not overlap

            writer.add_summary(summary, epoch*batch_count+i)

        print("Epoch", epoch)

        print("Accuracy", accuracy.eval(feed_dict={x: mnist.test.images, y: mnist.test.labels}))

    # flush pending events to disk

    writer.close()

print("Done")
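The second argument of writer.add_summary is the step that TensorBoard uses as the x-axis, so each batch should get its own increasing value. A minimal sketch of one way to derive it from the epoch and batch indices follows; the helper name `global_step` and the small loop sizes are assumptions chosen for illustration, not values from the demo.

```python
def global_step(epoch, batch_index, batches_per_epoch):
    """Map (epoch, batch index) to one increasing counter across all epochs."""
    return epoch * batches_per_epoch + batch_index


# hypothetical sizes: 3 epochs with 4 batches each
epochs, batches_per_epoch = 3, 4
steps = [
    global_step(epoch, i, batches_per_epoch)
    for epoch in range(epochs)
    for i in range(batches_per_epoch)
]
print(steps)
```

If several summaries were written with the same step, they would be plotted at the same x position, and the scalar curves would show only one x value per epoch.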

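Viewing the results

# Run the Python program

$ python3 hw_recognition.py # name of the Python file

# Start TensorBoard

$ tensorboard --logdir='five_layers_log' # log path used in the program

TensorBoard in the browser

Open http://127.0.1.1:6006 in a browser to view the results. Some screenshots follow.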

- scalar

- image

- graph